Thursday, 23 January 2025

Qt in 2024

2024 was another outstanding year for Qt, filled with exciting milestones and achievements! Highlights of the year include the Qt 6.7 and Qt 6.8 releases, Qt Creator 15 release, and the Qt Contributor Summit.

Qt in 2024

2024 was another outstanding year for Qt, filled with exciting milestones and achievements! Highlights of the year include the Qt 6.7 and Qt 6.8 releases, Qt Creator 15 release, and the Qt Contributor Summit.

Ruqola 2.4.1 is a feature and bugfix release of the Rocket.chat messenger app.

URL: https://download.kde.org/stable/ruqola/

Source: ruqola-2.4.1.tar.xz

SHA256: e5adb0806e12b4ce44b55434256139656546db9f5b8d78ccafae07db0ce70570

Signed by: E0A3EB202F8E57528E13E72FD7574483BB57B18D Jonathan Riddell jr@jriddell.org

https://jriddell.org/jriddell.pgp

One of my leisure time activities is to develop KMyMoney, a personal finance management application. Most of my time is spent on development, testing, bug reproduction and fixing, user support and sometimes I even write some documentation for this application. And of course, I use it myself on a more or less daily basis.

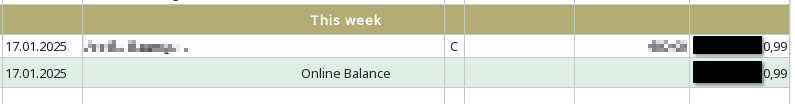

One of the nice KMyMoney features that helps me a lot is the online transaction download. It’s cool, if you simply fire up your computer in the morning, start KMyMoney, select the “Account/Update all” function, fill in the passwords to your bank and Paypal accounts when asked (though also that is mostly automated using a local GPG protected password store) and see the data coming in. After about a minute I have an overview what happened in the last 24 hours on my accounts. No paper statement needed, so one could say, heavily digitalized. At this point, many thanks go out to the author of AqBanking which does all the heavy work dealing with bank’s protocols under the hood. But a picture is worth a thousand words. See for yourself how this looks like:

The process is working for a long time and I have not touched any of the software parts lately. Today, I noticed a strange thing happening because one of my accounts showed me a difference between the account balance on file and the amount provided by the bank after a download. This may happen, if you enter transactions manually but since I only download them from the bank, there should not be any difference at all. Plus, today is Sunday while on the day before everything was just fine. First thought: which corner case did I hit that KMyMoney is behaving this way and where is the bug?

First thing I usually do in this case is to just close the application and start afresh. No way: same result. Then I remembered, that I added a feature the day before to the QIF importer which also included a small change in the general statement reader code. Of course, I tested things with the QIF importer but not with AqBanking. Maybe, some error creeped into the code and causes this problem. I double checked the code and since it dealt with tags – which are certainly not provided by my bank – it could not be the cause of it.

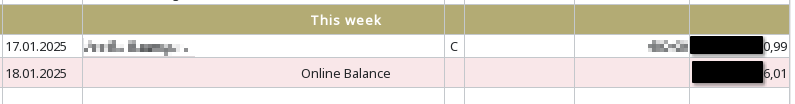

So I looked at the screen again:

New data must have been received because the date in the left column changed and also the amount of the colored row changed but not the one in the row above which still shows the previous state. The color is determined by comparing the balance information with the one in the row above. So where is/where are the missing transaction(s)?

Long story short: looking at the logs I noticed, that the online balance was transmitted but there was no transaction at all submitted by the bank. And if I simply take the difference between the two balances it comes down to a reimbursement payment which I expect to receive.

Conclusion: no bug in KMyMoney, but the bank simply provided inconsistent data. Arrrrgh.

You might have seen the awesome Klassy theme by Paul McAuley for Qt applications and window decorations for KWin.

It has some issues compiling against the latest Plasma since the KDecoration API break.

Until it is fixed in the main repository, I’ve created a temporary fork that includes the port to KDecoration3 done by Eliza Mason, with a tiny additional fix I added on top of it. The fork is available at github.com/ivan-cukic/wip-klassy

The kdesrc-build recipe for it is:

module klassy

repository https://github.com/ivan-cukic/wip-klassy

cmake-options \

-DBUILD_QT5=OFF \

-DBUILD_QT6=ON

branch plasma6.3

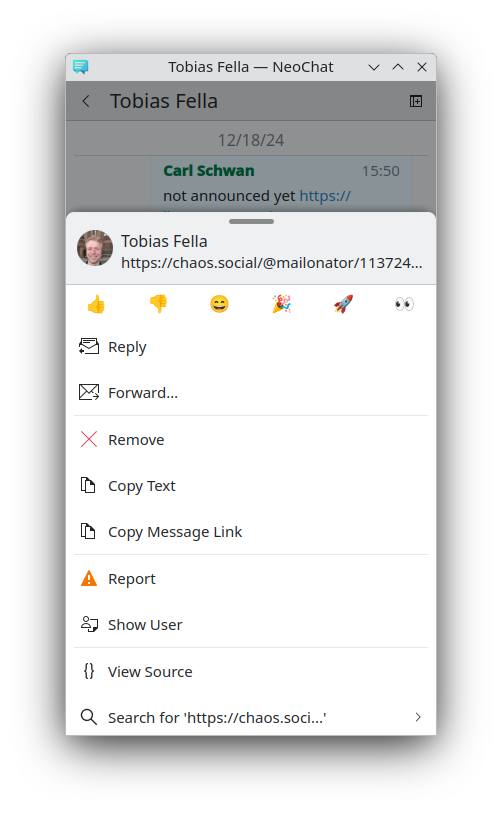

end moduleKirigami Addons is a collection of additional components for Kirigami applications. 1.7.0 is a relatively big release bringing a new convergent component for context menus as well as various quality of life APIs to existing components.

This release bring a new component which wraps the tradional context menu

Controls.Menu provided by Qt and on mobile will instead displays a BottomDrawer

with the list of actions.

Using it, is really easy:

import QtQuick.Controls as Controls

import org.kde.kirigami as Kirigami

import org.kde.kirigamiaddons.components as Components

import org.kde.kirigamiaddons.formcard as FormCard

Components.ConvergentContextMenu {

id: root

// Only visible on mobile to show a bit of information about the selected element

headerContentItem: RowLayout {

spacing: Kirigami.Units.smallSpacing

Kirigami.Avatar { ... }

Kirigami.Heading {

level: 2

text: "Room Name"

}

}

Controls.Action {

text: i18nc("@action:inmenu", "Simple Action")

}

Kirigami.Action {

text: i18nc("@action:inmenu", "Nested Action")

Controls.Action { ... }

Controls.Action { ... }

Controls.Action { ... }

}

Kirigami.Action {

text: i18nc("@action:inmenu", "Nested Action with Multiple Choices")

Kirigami.Action {

text: i18nc("@action:inmenu", "Follow Global Settings")

checkable: true

autoExclusive: true // Since KF 6.10

}

Kirigami.Action {

text: i18nc("@action:inmenu", "Enabled")

checkable: true

autoExclusive: true // Since KF 6.10

}

Kirigami.Action {

text: i18nc("@action:inmenu", "Disabled")

checkable: true

autoExclusive: true // Since KF 6.10

}

}

// custom FormCard delegate only supported on mobile

Kirigami.Action {

visible: Kirigami.Settings.isMobile

displayComponent: FormCard.FormButtonDelegate { ... }

}

}

Kirigami Addons components are using some breeze icons which needs to be packaged

manually on android by calling kirigami_package_breeze_icons with the icons used.

Now Kirigami Addons, provides a Cmake variable KIRIGAMI_ADDONS_ICONS listing all

the icons used by Kirigami Addons, simplifying the maintainance work of applications

to keep the list of icons used up-to-date.

kirigami_package_breeze_icons(ICONS

${KIRIGAMI_ADDONS_ICONS}

// own icons

...

)

Kirigami Addons’ shortcut editor can now be embedded in normal ConfigurationView via a new ConfigurationModule: ShortcutsConfigurationModule.

import org.kde.kirigamiaddons.settings as KirigamiSettings

KirigamiSettings.ConfigurationView {

id: root

required property TokodonApplication application

modules: [

...

KirigamiSettings.ShortcutsConfigurationModule {

application: root.application

},

]

}

The FormCardPage now uses a slighly less grey to get more contrasts with the sidebar.

We cleaned up FormComboBoxDelegate to not relly on the applicationWindow()

hack from Kirigami anymore. This fixes using FormComboBoxDelegate in Plasma

Settings. Unfortunately some areas of Kirigami Addons still implicitely rely on

applicationWindow() to set the parent of popups (Kirigami has a similar

issue). If you are using dialogs or popup in your code, make sure to

explicitely pass a valid Controls.Overlay.overlay as parent to them instead

of rellying on applicationWindow() being valid all the time.

The FormCard.AboutPage now show the KDE Frameworks version in use rather than

the one we built against. We are also using in the AboutKDEPage component,

the same bug address as in the AboutPage component. And we fixed various other small

issues with the about pages. Thanks Volker and Joshua!

We now use clang-format automatically and various clang-tidy warnings were fixed. Thanks Alex!

Avatar are now loaded asynchronously which should make NeoChat, Tokodon and Merkuro list views smoother. Thank Kai! Addionally the text fallback is now only rendered as plain text, which should also be sligly faster.

The RadioSelector now uses the style from Marknote.

We updated the templates provided by Kirigami Addons to the latest version of the flatpak

runtimes and some other minor improvements like using the new KLocalizedQmlContext.

AlbumMaximizeComponent now expose not only the currentItem from the internal

view, but also the currentIndex.

The IndicatorItemDelegate and RoundedItemDelegate components are now easier to use with drag and drop interaction. You can see that in effect in last week update of Merkuro Mail.

MessageDialog now behaves better on mobile.

You can find the package on download.kde.org and it has been signed with my GPG key.

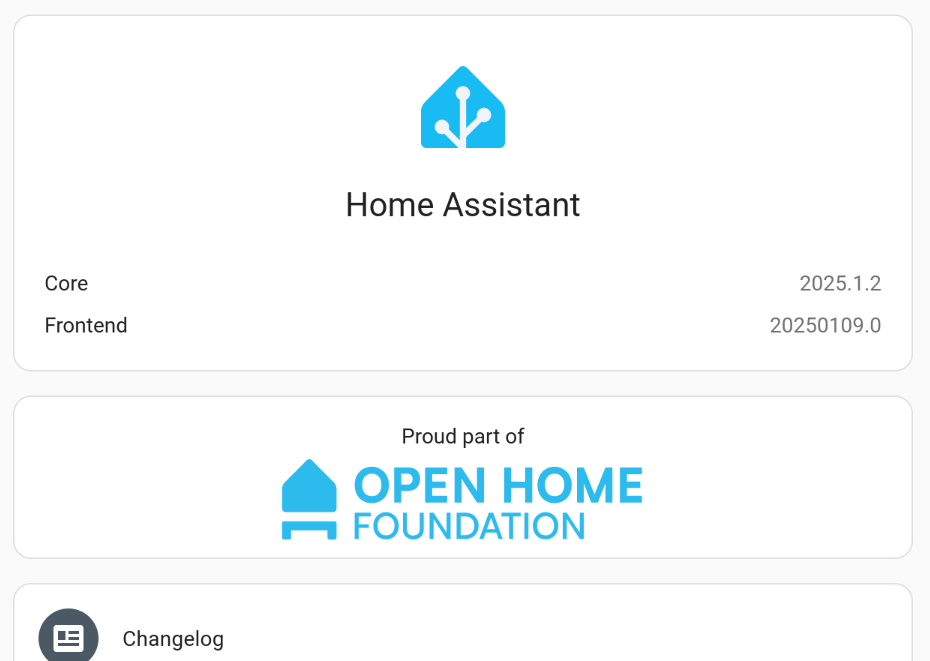

I run Home Assistant Core on a Raspberry Pi. I installed it in a Python venv and now and then I feel a need to upgrade. Today was such a day.

So, having backed everything up, I went for the plunge. Let’s install version 2025.1.2.

The usual dance goes a bit like this:

sudo systemctl stop homeassistant

sudo su homeassistant

cd /opt/homeassistant

source bin/activate

pip install --upgrade homeassistant

exit

sudo systemctl start homeassistantThen all the dependencies are installed, so I usually go for a coffee, and once things have settled down (I use top to check that the system is idle), I usually restart homeassistance, just to make sure that it stops and starts nicely.

This time, I had no such luck. Lots of little issues. The major one seemed to be that import av in one of the core modules suffered from some sort of ValueError exception.

Having duckducked the issue for a while, I realized this meant that I had to do the upgrade from Python 3.12 to 3.13. Upgrading va to version 14.x using pip does not help. Since I always forget how to do this, I’m now writing this blog post.

Recollecting the steps, the moves are, more or less these:

sudo apt-get install python3.13 python3.13-venv python3.13-dev

sudo systemctl stop homeassistant

sudo su homeassistant

cd /opt/homeassistant

mkdir old

mv bin/ cache/ include/ lib/ lib64 LICENSE pyvenv.cfg share/ old

python3.13 -m venv .

source bin/activate

pip install homeassistant

exit

sudo systemctl start homeassistantAgain, restarting Home Assistant takes a while and a bit more since all the dependencies are built. Go grab a snack or just a quiet coffee and, viola, you will end up with a fresh install of Home Assistant version 2025.1.2

Tellico 4.1 is available, with some improvements and bug fixes. This release and any subsequent bugfix dot releases (such as 4.1.1) will be the last ones that build with Qt5.

If you’re old enough, you probably remember that there was a meme from the 4.x days is that Plasma is all about clocks.

I’ve started working on some new artwork, and ended up sidetracked spending more time designing fun clocks for Plasma than on what I planned to work on, proving there’s some truth to the meme.

These are based on one of the coolest watch designs I’ve seen in recent years – a Raketa Avant Garde:

In the latest Plasma 6.3 Beta, you will find a new executable named kcursorgen in /usr/bin. It can convert an SVG cursor theme to the XCursor format, in any sizes you like. Although this tool is intended for internal use in future Plasma versions, there are a few tricks you can play now with it and an SVG cursor theme.

(Unfortunately, the only theme with the support that I know, besides Breeze, is Catppuccin. I have this little script that might help you convert more cursor themes.)

qt6-svg library.xcursorgen command, usually found in xorg-xcursorgen package.You should be able to set any cursor size with SVG cursors, right? Well, not at the moment, because:

But we can do it manually with kcursorgen. Take Breeze for example:

First, copy the cursor theme to your home directory. And let's change the directory name, so the original one is not overriden:

mkdir -p ~/.local/share/icons

cp -r /usr/share/icons/breeze_cursors ~/.local/share/icons/breeze_cursors.my

Then open ~/.local/share/icons/breeze_cursors.my/index.theme in the editor. Change the name in Name[_insert your locale_]= so you can tell it from the original in the cursor settings.

For example, if we want a size 36 cursor, and the display scale is 250%:

cd ~/.local/share/icons/breeze_cursors.my

rm -r cursors/

kcursorgen --svg-theme-to-xcursor --svg-dir=cursors_scalable --xcursor-dir=cursors --sizes=36 --scales=1,2.5,3

Some Wayland apps don't support fractional scaling, so they will round the scale up. So we need to include both 2.5 and 3 in the scale list.

The above command generates XCursor at size 36, 90 and 108. Note that the max size of the original Breeze theme is 72, so this is something not possible with the original theme.

(kcursorgen also adds paddings when necessary, to satisfy alignment requirements of some apps / toolkits. E.g., GTK3 requires cursor image sizes to be multiple of 3 when the display scale is 3. So please use --sizes=36 --scales=1,2.5,3, not --sizes=36,90,108 --scales=1, because only the former would consider alignments.)

Then you can go to systemsettings - cursor themes, select your new theme, and choose size 36 in the dropdown.

(Yes, you can have HUGE cursors without shaking. Size 240.)

As explained before, Breeze theme triggers a bug in GTK4 when global scaling is used, resulting in huge cursors. It's because Breeze's "nominal size" (24) is different from the image size (32).

We can work around this problem by changing the nominal size to 32.

Step 1 is same as above. Then we modify the metadata:

cd ~/.local/share/icons/breeze_cursors.my

find cursors_scalable/ -name 'metadata.json' -exec sed -i 's/"nominal_size": 24/"nominal_size": 32/g' '{}' \;

rm -r cursors/

kcursorgen --svg-theme-to-xcursor --svg-dir=cursors_scalable --xcursor-dir=cursors --sizes=32 --scales=1,1.5,2,2.5,3

Then you can go to systemsettings - cursor themes, select your new theme, and choose size 32 in the dropdown. Cursors in GTK4 apps should be fixed now.

It might be possible to only package the index.theme file and cursors_scalable directory for the Breeze cursor theme (and other SVG cursors themes), then in an postinstall script, use kcursorgen to generate the cursors directory on the user's machine.

This would greatly reduce the package size. And also you can generate more sizes without worrying about blown package size.

But the fact that kcursorgen is in the breeze package might make some dependency problems. I have an standalone Python script that does the same. (But it requires Python and PySide6.)