Friday, 13 March 2026

KDE today announces the release of KDE Frameworks 6.24.0.

This release is part of a series of planned monthly releases making improvements available to developers in a quick and predictable manner.

New in this version

KCodecs

- [KEncodingProber] Replace nsCharSetProber raw pointer with unique_ptr. Commit.

- [KEncodingProber] Replace SMModel external with internal linkage. Commit.

- [KEncodingProber] Replace nsCodingStateMachine raw pointer with unique_ptr. Commit.

- [KEncodingProber] Remove unused header files. Commit.

- [KCharsets] Remove no longer used include. Commit.

- [KCharsets] Verify entity table is sorted at build time. Commit.

- [KCharsets] Fix sort order in entity table. Commit.

- [KCharsets] Add benchmark for entity lookup. Commit.

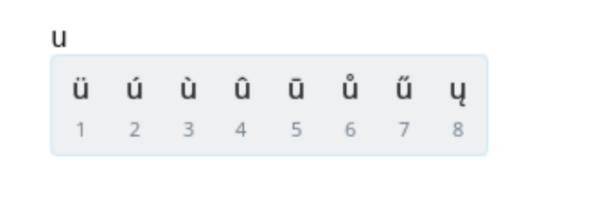

- [KCharSets] Specify the fromEntity input format more explicitly. Commit.

- [KCharSets] Fix numeric encoding for toEntity(...). Commit.

- [KCharSetsTest] Move test class declaration to implementation file. Commit.

- [KEncodingProber] Switch state machine tables to plain uint8_t. Commit.

- [KEncodingProber] Remove runtime unpack state machine. Commit.

- [KEncodingProber] Use same class table for UTF16 BE and LE state models. Commit.

- [KEncodingProber] Fix reset() method. Commit.

- [KEncodingProber] Actually check if reset() works. Commit.

- [KEncodingProber] Reduce variable scope. Commit.

- Remember where to re-try RFC 2047 word decoding. Commit.

- [KEncodingProber] Default empty constructors/destructors. Commit.

- [KEncodingProber] Remove unused unexported member functions. Commit.

- [KEncodingProber] Remove unused member variable. Commit.

- [KEncodingProber] Remove no longer used include. Commit.

- [KEncodingProber] Drop declaration of unused GetDistribution method. Commit.
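The "Verify entity table is sorted at build time" entry describes a general technique that can be sketched in a few lines. This is not the KCharsets code: the `Entity` struct, the `kEntities` table, and the `isSortedByName` and `lookupEntity` helpers are hypothetical names used only for illustration. The idea is that a `static_assert` rejects an out-of-order table during compilation, so the binary search used for lookups can rely on the ordering.

```cpp
#include <algorithm>
#include <array>
#include <cstddef>
#include <cstdint>
#include <string_view>

// Hypothetical entity record; the real KCharsets table layout may differ.
struct Entity {
    std::string_view name;
    std::uint32_t codePoint;
};

// Tiny illustrative table, sorted by name in plain byte order.
inline constexpr std::array<Entity, 5> kEntities{{
    {"amp", 0x26},
    {"gt", 0x3E},
    {"lt", 0x3C},
    {"nbsp", 0xA0},
    {"quot", 0x22},
}};

// Returns true when names are strictly increasing (sorted, no duplicates).
template <std::size_t N>
constexpr bool isSortedByName(const std::array<Entity, N> &table)
{
    for (std::size_t i = 1; i < N; ++i) {
        if (!(table[i - 1].name < table[i].name)) {
            return false;
        }
    }
    return true;
}

// Compilation fails here if an entry is added out of order.
static_assert(isSortedByName(kEntities), "entity table must be sorted by name");

// Binary search over the sorted table; returns 0 when the name is unknown.
std::uint32_t lookupEntity(std::string_view name)
{
    const auto it = std::lower_bound(
        kEntities.begin(), kEntities.end(), name,
        [](const Entity &e, std::string_view n) { return e.name < n; });
    return (it != kEntities.end() && it->name == name) ? it->codePoint : 0;
}
```

With this shape, a mis-sorted entry becomes a compile error instead of a lookup that silently misses at runtime.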

KConfig

- Fix bounds check in KConfigPrivate::expandString. Commit.

- Kdesktopfile: do not needlessly cascade desktop files. Commit.

- Add oss-fuzz integration. Commit.

- Remove old, commented out code. Commit.

- With get_filename_component use DIRECTORY instead of legacy alias PATH. Commit.

- KDesktopFileTest: Update testActionGroup. Commit.

- KDesktopFile: Check for Name since it is required field. Commit. Fixes bug #515694

KCoreAddons

- Autotests: increased safety margins for unstable tests. Commit.

KGuiAddons

- Clipboard: Use buffered writes for data transfer. Commit.

- Add manual large clipboard tests. Commit.

- Ksysteminhibitor: Support Windows through PowerCreateRequest API. Commit.

- Remove unneeded Qt version check. Commit.

- Kiconutils: remove now unnecessary Qt version check. Commit.

- Clipboard: Hold mutex before dispatching any wayland events. Commit. Fixes bug #515465

- Mark WindowInsetsController as singleton in the documentation. Commit.

- CMake: Find Qt6::GuiPrivate when USE_DBUS is enabled. Commit.

- KKeySequenceRecorder: Accept some more keys that can be used with Shift. Commit.

KHolidays

- Add more holidays to Bulgaria. Commit.

- Quiet compiler warnings using inline pragmas. Commit.

- DE: From 1954 to 1990, there was a “Tag der deutschen Einheit” (note the lowercase “d” in “deutschen”) in West Germany. Commit.

- DE: Before 1990, there was no "Tag der Deutschen Einheit". Commit.

- DE: Before 1990, there was no "Tag der Deutschen Einheit". Commit.

- Generate the bison/flex code. Commit.

KImageformats

- JP2: fix possible Undefined-shift. Commit.

- IFF: fix buffer read overflow. Commit.

- Fix Heap-buffer-overflow WRITE. Commit.

- Fixed excessively frequent warning messages. Commit.

- Ossfuzz: update aom, libavif, openjpeg. Commit.

- ANI: fix possible QByteArray allocation exception. Commit.

- Jxl: adjust metadata size limits. Commit.

- RGB: fix a possible exception on new. Commit.

- TGA: fix Undefined-shift. Commit.

- PSD: improve conversion sanity checks. Commit.

- IFF: fix compilation warnings. Commit.

- ANI: check for array allocation size. Commit.

KIO

- Refactor and improve paste dialogs. Commit.

- Add title for dialogs opened when pasting content. Commit.

- KNewFileMenu: Strip proper ellipsis, too. Commit.

- KFileItemDelegate. Commit.

- Trash: fix typo and use correct device id for home dev. Commit.

- Visual changes to KFileWidgets to bring it closer to Dolphin. Commit. Fixes bug #516063

- Widgets: Make use of nanosecond timestamps when appropriate. Commit.

- Workers: Populate nanosecond timestamps in KIO workers. Commit.

- Core: Extend UDSEntry with nanosecond precision timestamps. Commit.

- FilePreviewJob: Stat MountId and use it to look up the mount. Commit.

- KMountPoint: Add findByMountId. Commit.

- Filewidgets/placesview: add mountpoint tooltips for network mounts. Commit.

- KFileItemActions: Use OpenUrlJob::isExecutableFile for "Run executable". Commit.

- Kfileitemactions: Add i18n context inmenu. Commit.

- Trashimpl: use mnt_id as trashId instead of dev_id. Commit. Fixes bug #513350. Fixes bug #386104. See bug #490247

- Kmountpoint: expose mnt_id_unique and isPseudoFs. Commit.

- KFileItemActions: Add API for service menu keyboard shortcut. Commit.

- Drop Worker::workerProtocol. Commit.

- Kpropertiesdialog: Use MIME type we already have for default icon. Commit.

- Core: Drop unused code. Commit.

- Filepreviewjob: Add timeout. Commit. Fixes bug #504067

- Deleteortrashjob: Remember whether AutoErrorHandling was enabled. Commit.

- Deleteortrashjob: Don't overwrite job delegate, if it exists. Commit.

- Gui/openurljob: Stops job on missing Type in desktop files. Commit.

- Core/mimetypefinderjob: Fix missing early return in KIO::MimeTypeFinderJobPrivate::scanFileWithGet. Commit.

- Trashsizecache: Fix look up of directory size cache. Commit. See bug #434175

- Drop WorkerConfig::setConfigData. Commit.

- Simplify SimpleJob::slotMetaData. Commit.

- Drop special handling for internal metadata. Commit.

- Storedtransferjob: Drop secret OverriddenPorts option. Commit.

Kirigami

- Workaround crash due to QTBUG-144544. Commit. Fixes bug #514098

- Platform: Deprecate PlatformTheme::useAlternateBackgroundColor. Commit.

- Platform: Move useAlternateBackgroundColor from Theme to StyleHints. Commit.

- Platform: Introduce StyleHints as common API for extra style behaviour. Commit.

- InlineViewHeader: Create a template and utilize it. Commit.

- NavigationTab Bar/Button: Create templates. Commit.

- Heading: Create a template, base control on top of it. Commit.

- Mark QML singletons in documentation. Commit.

- Remove duplicate since documentation. Commit.

- Add missing documentation module dependency. Commit.

- Autotests: Mark test_absolutepath_recoloring of tst_icon.qml as skipped. Commit.

- Controls: Port kirigamicontrolsplugin to use Qt::StringLiterals. Commit.

- Controls: Use componentUrlForModule for looking up controls files. Commit.

- Platform: Port BasicTheme to use StyleSelector::componentUrlForModule. Commit.

- Platform: Greatly simplify StyleSelector::styleChain. Commit.

- Platform: Deprecate componentUrl, rootPath and resolveFileUrl in StyleSelector. Commit.

- Platform: Simplify StyleSelector::resolveFilePath. Commit.

- Platform: Add StyleSelector::componentUrlForModule. Commit.

- Move all controls in own import. Commit.

- Fix Kirigami.InputMethod.willShowOnActive. Commit.

- Fix placeholder. Commit.

- Reduce text duplication for shortcut tooltips in action toolbar. Commit. Fixes bug #515958

- Show keyboard shortcut in action toolbar tooltips. Commit.

- Work around Qt bug causing kirigami components to not load when multiple qml engines are involved. Commit.

KJobWidgets

- Don't show empty error notifications in KNotificationJobUIDelegate. Commit.

KService

- Make updateHash slightly faster. Commit.

- Ksycoca: do not allow for recursive repairs. Commit. Fixes bug #516426

- Kservice: correctly type the unused legacy field as 8 bits. Commit.

- Ksycocafactory: do not crash when failing to find a factory stream. Commit.

- Ksycocafactory: guard against integer underflow. Commit.

- Enable LSAN in CI. Commit.

- Fix KServiceAction+KService memory leak. Commit.
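For readers unfamiliar with the class of bug behind "guard against integer underflow", the following minimal sketch shows the usual pattern. It is not taken from KSycocaFactory; `remainingBytes` is a hypothetical helper. Subtracting unsigned values wraps around to a huge number when the result would be negative, so the comparison has to happen before the subtraction.

```cpp
#include <cstdint>
#include <optional>

// Hypothetical helper: bytes left after `offset` in a stream of `size` bytes.
// Returns std::nullopt instead of wrapping around when offset exceeds size.
std::optional<std::uint64_t> remainingBytes(std::uint64_t size, std::uint64_t offset)
{
    if (offset > size) {
        return std::nullopt; // size - offset would underflow
    }
    return size - offset;
}
```

A caller can then treat the empty result as corrupt input and bail out instead of reading past the end of the data.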

KTextEditor

- Search: add a way to clear the history. Commit. Fixes bug #503327

- Enable Werror on CI. Commit.

- Reduce QLatin1Char noise. Commit.

- Fix clipboard warning, check if QClipboard::Selection is supported. Commit.

- Ensure we write a BOM if wanted for empty files, too. Commit.

- Avoid temporary allocations when saving the file. Commit.

- Properly pass parent pointer for document. Commit.
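The BOM fix for empty files can be illustrated with a small sketch. This is an assumption about the general behaviour, not KTextEditor's implementation, and `encodeUtf8` is a hypothetical function: the byte order mark is written whenever it is requested, regardless of whether any text follows.

```cpp
#include <string>
#include <string_view>

// Hypothetical helper: serialise UTF-8 text, optionally prefixed by a BOM.
std::string encodeUtf8(std::string_view text, bool writeBom)
{
    std::string out;
    if (writeBom) {
        // UTF-8 byte order mark, emitted even when `text` is empty.
        out.append("\xEF\xBB\xBF");
    }
    out.append(text);
    return out;
}
```

The key design point is that the BOM decision does not depend on the content length, so an empty document saved with a BOM yields a three-byte file.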

KTextTemplate

- With get_filename_component use DIRECTORY instead of legacy alias PATH. Commit.

KUserFeedback

- Don't report nonsensical screen information. Commit.

KWidgetsAddons

- Kacceleratormanager: Avoid unnecessary allocations when searching for used shortcuts. Commit.

- Kactionmenu: Be more forgiving about menu ownership. Commit.

- Kactionmenu: Fix ownership of default menu. Commit.

- Enable LSAN in CI. Commit.

- Avoid mem-leak in KDateTimeEditTest::testDateMenu. Commit.

- KAcceleratorManager: Avoid unnecessary allocation. Commit.

- Kmimetypechooser.h: Remove default arguments from an overlapping constructor and mark it as deprecated for 6.24. Commit.

KWindowSystem

- Remove unused include. Commit.

- Port createRegion to QNativeInterface. Commit.

- Port surfaceForWindow to QNativeInterface. Commit.

- Wayland: Don't try to export window that isn't xdg_toplevel. Commit. Fixes bug #516994

- Port xdgToplevelForWindow to QNativeInterface. Commit.

- Wayland: Fix importing window that's not exposed but already has surface role. Commit.

- Remove unneeded Qt version check. Commit.

- Platforms/wayland: Manage blur, contrast, and slide globals with std::unique_ptr. Commit.

- Platforms/wayland: Add missing initialize(). Commit.

- Platforms/wayland: add missing blur capability with ext-background-effect. Commit.

- Wayland: implement background effect protocol. Commit.

- Platforms/wayland: ensure we always react to surface destruction. Commit.

- Run clang-format on all files. Commit.

Modem Manager Qt

- Add new cell broadcast interfaces from MM API. Commit.

- Update modem firmware interface to latest MM API. Commit.

- Update modem voice interface to latest MM API. Commit.

- Update call interface to latest MM API. Commit.

- Update modem interface to latest MM API. Commit.

- Update bearer interface to latest MM API. Commit.

- Update modem messaging interface to latest MM API. Commit.

- Update modem signal interface to latest MM API. Commit.

- Update modem location interface to latest MM API. Commit.

QQC2 Desktop Style

- Use Kirigami.StyleHints for tick marks in Slider. Commit.

- Use Kirigami.StyleHints for useAlternateBackgroundColor in list item background. Commit.

- Use Kirigami.StyleHints for icons in ComboBox. Commit.

- Use Kirigami.StyleHints to determine ScrollView background visibility. Commit.

- Menu: make sure implicitWidth/height is never 0. Commit. Fixes bug #516151

- Make sure that the default behaviours of onPressed and onLongPressed are not triggered if the event is accepted. Commit.

@merritt:kde.org
